Edge Caching and Distributed Computing for Millisecond Global Latency

In our global digital world, users expect instant responses. Delays mean lost engagement and revenue. This article explores how edge caching and distributed computing work together to achieve millisecond global latency, transforming user experiences and enabling high-performance applications.

The Imperative of Low Latency in a Globalized Digital Landscape

Latency, the delay between a user's action and an application's response, grows with the distance a request must travel. The remedy is to bring data and computation closer to the user. Left unaddressed, high latency leads to:

Slow page loads and sluggish application responses.

Laggy real-time interactions (e.g., gaming, video conferencing).

Frustrated users and abandoned sessions.

Reduced SEO rankings and conversion rates.

Impaired operational efficiency for distributed teams.
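To see why distance alone matters, it helps to estimate the propagation delay that no amount of server tuning can remove. The sketch below is a rough lower bound only: the fiber speed of about 200,000 km/s (roughly two thirds of the speed of light in a vacuum), the London to New York distance of about 5,570 km, and the 50 km distance to a nearby PoP are all approximations or hypothetical values, and real routes add extra distance, processing, and queuing time.

```javascript
// Approximate signal speed in optical fiber (assumption: about 2/3 of c).
const FIBER_SPEED_KM_PER_S = 200000;

// Lower bound on round-trip time in milliseconds for a one-way distance in km.
function minRoundTripMs(distanceKm) {
  const oneWaySeconds = distanceKm / FIBER_SPEED_KM_PER_S;
  return 2 * oneWaySeconds * 1000;
}

// London to New York, roughly 5,570 km along the great circle (approximation).
const transatlanticMs = minRoundTripMs(5570);
// A PoP about 50 km from the user (hypothetical distance).
const nearbyPopMs = minRoundTripMs(50);

console.log(transatlanticMs.toFixed(1)); // 55.7
console.log(nearbyPopMs.toFixed(1));     // 0.5
```

Even before any server work happens, the transatlantic round trip costs tens of milliseconds, while a nearby edge location stays well under one.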

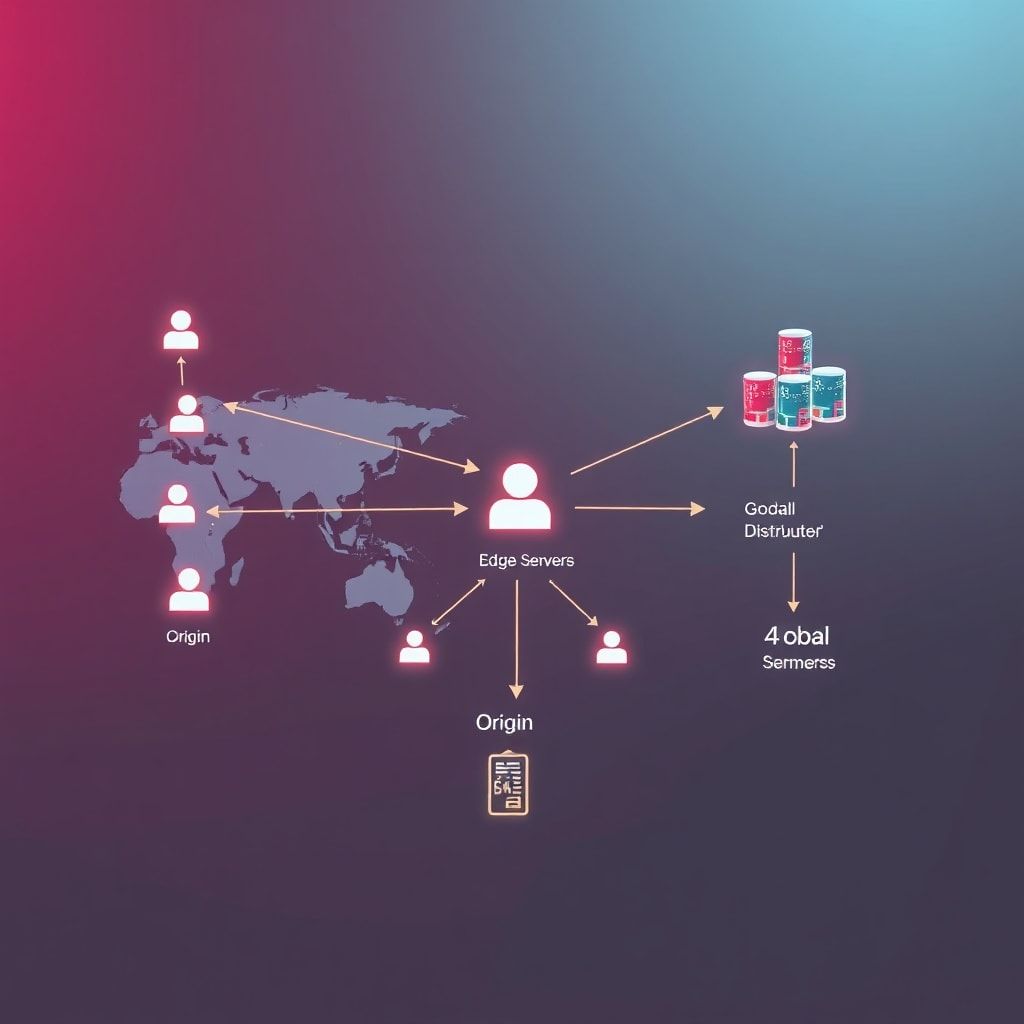

Edge Caching: Bringing Content Closer to the User

Edge caching stores copies of content at "edge locations", also called points of presence (PoPs), around the world, closer to users than the origin servers. Content is served from the nearest edge location, which cuts load times and latency.

How Edge Caching Works:

A Content Delivery Network (CDN) is the most common implementation of edge caching. Consider the following request flow:

A user in London requests an image from a server located in New York.

Without a CDN, the request travels across the Atlantic to New York, and the image travels back.

With a CDN, the request first hits a CDN PoP in London.

If the image is cached at the London PoP, it's immediately served to the user.

If not, the London PoP fetches it from the New York origin server, caches it, and then serves it to the user. Subsequent requests from London users for the same image will be served from the London PoP.
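The hit and miss flow above can be sketched as a small in-memory model. This is a simplified illustration, not how any particular CDN is implemented: `createEdgePop`, `fetchFromOrigin`, and the `/img/logo.png` path are hypothetical names, and the sketch ignores expiry, eviction, and concurrent requests.

```javascript
// Minimal in-memory model of one edge PoP in front of an origin server.
// `fetchFromOrigin` is a hypothetical stand-in for the slow long-distance request.
function createEdgePop(fetchFromOrigin) {
  const cache = new Map();
  return {
    get(path) {
      if (cache.has(path)) {
        // Cache hit: serve directly from the PoP.
        return { body: cache.get(path), servedFrom: "edge" };
      }
      // Cache miss: fetch from the origin, then keep a copy for later requests.
      const body = fetchFromOrigin(path);
      cache.set(path, body);
      return { body, servedFrom: "origin" };
    },
  };
}

// Count how often the origin is actually contacted.
let originRequests = 0;
const londonPop = createEdgePop((path) => {
  originRequests += 1;
  return `contents of ${path}`;
});

const first = londonPop.get("/img/logo.png");  // miss, fetched from the origin
const second = londonPop.get("/img/logo.png"); // hit, served from the PoP
console.log(first.servedFrom, second.servedFrom, originRequests); // origin edge 1
```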

Benefits of Edge Caching:

Reduced Latency: Content is delivered from the nearest server, minimizing geographical distance.

Improved Performance: Faster loading times enhance user experience and engagement.

Reduced Load on Origin Servers: Offloading requests to edge servers reduces the burden on your central infrastructure, allowing it to handle more dynamic tasks.

Increased Availability and Reliability: If an origin server goes down, cached content can still be served. CDNs often offer DDoS protection.

Cost Savings: Lower bandwidth usage from origin servers can lead to reduced hosting costs.

While traditionally focused on static assets, modern edge caching increasingly handles dynamic content, API responses, and serverless functions at the edge, blurring the lines between content delivery and computation.

Distributed Computing: Scaling Processing Power Globally

Distributed computing uses multiple, geographically dispersed components that act as one system. It's essential for applications needing high availability, fault tolerance, and massive data/traffic processing without a single point of failure.

Key Principles and Architectures:

Decentralization: There is no single central authority; control and data are spread across nodes.

Concurrency: Multiple tasks can execute simultaneously on different nodes.

Resource Sharing: Hardware and software resources are shared.

Openness: The system is designed for easy extensibility and scalability.

Common Distributed Computing Paradigms Relevant for Low Latency:

Microservices Architecture: Breaking an application into independent services, deployable and scalable across regions.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: user-service-europe
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: user-service
          region: europe
      template:
        metadata:
          labels:
            app: user-service
            region: europe
        spec:
          containers:
            - name: user-service
              image: your-repo/user-service:1.0.0
              ports:
                - containerPort: 8080
              env:
                - name: DATABASE_URL
                  value: "jdbc:postgresql://eu-db:5432/users"

Serverless Functions (Function-as-a-Service - FaaS): Functions executed in response to events, without infrastructure management. Often leverages global edge networks for low latency.

    // Example edge function in Cloudflare Workers service-worker syntax.
    // AWS Lambda@Edge uses a different handler signature.
    addEventListener('fetch', event => {
      event.respondWith(handleRequest(event.request))
    })

    async function handleRequest(request) {
      const url = new URL(request.url)
      if (url.pathname === '/api/hello') {
        return new Response('Hello from the Edge!', { status: 200 })
      }
      return fetch(request) // Pass through to origin
    }

Globally Distributed Databases: Databases storing data across multiple regions, offering multi-region replication and geo-partitioning for low-latency access and consistency.

Active-Active Replication: Data can be written to and read from multiple regions simultaneously.

Eventual Consistency: Data might not be immediately consistent across all replicas but will converge over time, suitable for global scale where immediate strong consistency would introduce unacceptable latency.

Container Orchestration (e.g., Kubernetes): Tools like Kubernetes manage distributed application deployment across various regions or hybrid environments.
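To make the idea of eventual consistency under active-active replication more concrete, the sketch below shows one simple conflict-resolution rule, last-writer-wins (LWW). It is only one of several possible strategies and is not claimed to be what any specific database does; the record shape, region names, and timestamps are hypothetical. LWW depends on reasonably synchronized clocks and silently discards the older of two concurrent writes.

```javascript
// Last-writer-wins (LWW) merge for a single key replicated in two regions.
// Each replica stores { value, timestamp, region }. Ties on timestamp are
// broken by region name so that every replica picks the same winner.
function mergeLww(a, b) {
  if (a.timestamp !== b.timestamp) {
    return a.timestamp > b.timestamp ? a : b;
  }
  return a.region > b.region ? a : b;
}

// Two regions accept concurrent writes to the same key (hypothetical data).
const euWrite = { value: "dark-mode", timestamp: 1700000000200, region: "eu-west" };
const usWrite = { value: "light-mode", timestamp: 1700000000100, region: "us-east" };

// Once the replicas exchange state, both converge on the same value,
// regardless of the order in which they merge.
const euState = mergeLww(euWrite, usWrite);
const usState = mergeLww(usWrite, euWrite);
console.log(euState.value, usState.value); // dark-mode dark-mode
```

Between the two writes and the exchange, a reader in the US region could still see "light-mode"; that window is the price paid for accepting writes locally without cross-region coordination.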

The Synergy: Edge Caching Meets Distributed Computing

The real power for millisecond global latency comes from combining edge caching with distributed computing. Edge caching excels at static content, while distributed computing complements it by bringing dynamic logic, data processing, and storage closer to the user.

Practical Scenarios for Synergy:

Edge AI/ML Inference: Deploy AI models or pre-processing logic to edge nodes for real-time inference, reducing data transfer and central load.

Real-time Personalization: Dynamically generate and cache user recommendations at the edge using serverless functions and nearby distributed databases.

Globally Distributed APIs and Microservices: Deploy API gateways and microservices regionally; CDNs route requests to the nearest instance for minimal latency.

Dynamic Content Generation and A/B Testing: Edge workers can assemble components, perform A/B testing, or generate dynamic content based on user context, reducing origin server reliance.
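For the A/B testing scenario, an edge worker needs to assign each user to a variant without calling back to the origin. One common approach, sketched here under assumptions, is to hash a stable user identifier so the same user always lands in the same bucket. The function names, the experiment name, and the variant labels are hypothetical, and the hash is a simple FNV-1a style function over UTF-16 code units, which is adequate for illustration but not a cryptographic or statistically audited choice.

```javascript
// FNV-1a style 32-bit hash over the string's UTF-16 code units.
function fnv1a(text) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    // Multiply by the FNV prime and keep the result as an unsigned 32-bit value.
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash;
}

// Deterministically map a user to one of the variants for an experiment.
// Including the experiment name keeps assignments independent across experiments.
function assignVariant(userId, experiment, variants) {
  const bucket = fnv1a(`${experiment}:${userId}`) % variants.length;
  return variants[bucket];
}

const variants = ["control", "new-checkout"];
const firstVisit = assignVariant("user-42", "checkout-test", variants);
const laterVisit = assignVariant("user-42", "checkout-test", variants);
console.log(firstVisit === laterVisit); // true
```

Because the assignment is a pure function of the identifier, every edge location reaches the same decision without sharing state.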

Implementation Strategies and Best Practices

Implementing an edge caching and distributed computing system requires careful planning.

1. Content Strategy and Caching Policies:

Identify Cacheable Content: Prioritize static assets, common API responses, and user-agnostic dynamic content.

Optimal TTLs (Time-To-Live): Balance freshness with caching efficiency using cache-control headers.

Edge Logic for Dynamic Content: Use Edge Side Includes (ESI) or serverless functions at the edge for personalization or content assembly.
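As a rough illustration of a caching policy, the function below maps request paths to `Cache-Control` header values. The directives themselves (`max-age`, `s-maxage`, `immutable`, `stale-while-revalidate`, `private`, `no-store`, `no-cache`) are standard HTTP caching directives, but the path patterns and TTL numbers are assumptions chosen for the example and would need tuning for a real site.

```javascript
// Choose a Cache-Control header value for a response path.
// The path patterns and TTL values are illustrative assumptions.
function cacheControlFor(path) {
  // Fingerprinted static assets (for example app.3f9a1c.js) never change in place,
  // so they can be cached for a year.
  if (/\.[0-9a-f]{6,}\.(js|css|png|jpg|svg|woff2)$/.test(path)) {
    return "public, max-age=31536000, immutable";
  }
  // User-agnostic API responses: short shared-cache TTL, serve stale while refreshing.
  if (path.startsWith("/api/public/")) {
    return "public, s-maxage=60, stale-while-revalidate=30";
  }
  // Per-user data must not be stored by shared caches.
  if (path.startsWith("/api/me/")) {
    return "private, no-store";
  }
  // Default for HTML and everything else: revalidate with the origin before reuse.
  return "no-cache";
}

console.log(cacheControlFor("/static/app.3f9a1c.js")); // public, max-age=31536000, immutable
console.log(cacheControlFor("/api/me/profile"));       // private, no-store
```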

2. Distributed Architecture Design:

Microservices Granularity: Design services to be independent and regionally deployable.

Data Locality: Store data close to primary consumers/writers using globally distributed databases; consider eventual consistency.

Load Balancing and Routing: Implement intelligent global load balancing to direct users to the nearest healthy service instance.

Containerization and Orchestration: Use Docker and Kubernetes for consistent multi-region deployments.
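The routing rule described above, sending each user to the nearest healthy instance, can be sketched as a small selection function. Real global load balancers typically rely on DNS, anycast, or provider-specific health checks; here the region names, latency figures, and `healthy` flags are hypothetical inputs that such a system would supply.

```javascript
// Pick the healthy region with the lowest measured latency.
// Returns null when no region is healthy so the caller can fail over or error out.
function pickRegion(regions) {
  const healthy = regions.filter((r) => r.healthy);
  if (healthy.length === 0) return null;
  return healthy.reduce((best, r) => (r.latencyMs < best.latencyMs ? r : best));
}

// Hypothetical view of the world from a user in Western Europe.
const regions = [
  { name: "eu-west", latencyMs: 12, healthy: false }, // closest, but failing health checks
  { name: "eu-central", latencyMs: 21, healthy: true },
  { name: "us-east", latencyMs: 85, healthy: true },
];

console.log(pickRegion(regions).name); // eu-central
```

The closest region is skipped because it is unhealthy, which is the behavior that separates latency-aware routing from plain geographic routing.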

3. Observability and Monitoring:

End-to-End Tracing: Monitor requests across edge, regional, and origin infrastructure.

Regional Metrics: Track performance, error rates, and resource utilization per region.

Real User Monitoring (RUM): Collect actual user experience data to validate performance.
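For regional metrics, averages tend to hide the slow requests that users actually notice, so tail percentiles are usually more informative. The sketch below computes a p95 latency per region with the nearest-rank method; the sample shape (`region`, `latencyMs`) and the numbers are hypothetical, and production systems would normally use a metrics backend rather than sorting raw samples.

```javascript
// Nearest-rank percentile of a non-empty list of numbers.
function percentile(values, p) {
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Group latency samples by region and report the p95 for each one.
function p95ByRegion(samples) {
  const byRegion = new Map();
  for (const { region, latencyMs } of samples) {
    if (!byRegion.has(region)) byRegion.set(region, []);
    byRegion.get(region).push(latencyMs);
  }
  const result = {};
  for (const [region, values] of byRegion) {
    result[region] = percentile(values, 95);
  }
  return result;
}

// Hypothetical samples, for example collected from RUM beacons.
const samples = [
  { region: "eu", latencyMs: 10 },
  { region: "eu", latencyMs: 12 },
  { region: "eu", latencyMs: 11 },
  { region: "eu", latencyMs: 200 }, // one slow outlier dominates the tail
  { region: "us", latencyMs: 80 },
  { region: "us", latencyMs: 90 },
];

console.log(p95ByRegion(samples)); // { eu: 200, us: 90 }
```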

4. Security at the Edge:

WAF (Web Application Firewall) at the Edge: Protect against common web exploits closer to the source.

DDoS Protection: Leverage the CDN's network capacity to mitigate DDoS attacks.

API Security: Implement authentication, authorization, and rate limiting at the edge.

Data Encryption: Ensure data is encrypted in transit and at rest across all components.
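Rate limiting at the edge is often implemented with a token bucket, which allows short bursts while capping the sustained request rate. The following is a minimal single-process sketch: the capacity and refill values are arbitrary, time is passed in explicitly to keep the example deterministic, and a real edge deployment would need per-client buckets and some form of shared or approximate state across locations.

```javascript
// Token bucket: holds up to `capacity` tokens and refills at `refillPerSecond`.
// Each allowed request consumes one token.
function createTokenBucket(capacity, refillPerSecond, nowMs) {
  let tokens = capacity;
  let last = nowMs;
  return {
    // Returns true if the request at time `currentMs` is allowed.
    tryRemove(currentMs) {
      const elapsedSeconds = (currentMs - last) / 1000;
      tokens = Math.min(capacity, tokens + elapsedSeconds * refillPerSecond);
      last = currentMs;
      if (tokens >= 1) {
        tokens -= 1;
        return true;
      }
      return false;
    },
  };
}

// Burst of 2 requests allowed, then 1 request per second (hypothetical limits).
const bucket = createTokenBucket(2, 1, 0);
const results = [
  bucket.tryRemove(0),    // allowed, 1 token left
  bucket.tryRemove(0),    // allowed, 0 tokens left
  bucket.tryRemove(0),    // rejected, bucket is empty
  bucket.tryRemove(1000), // allowed, one token refilled after a second
];
console.log(results); // [ true, true, false, true ]
```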

5. Cost Optimization:

Bandwidth Savings: Reduce egress costs from origin by serving more from the edge.

Right-Sizing Compute: Scale regional compute resources independently based on local demand.

Managed Services: Utilize cloud provider managed services for databases and serverless functions.

Challenges and Considerations

Implementing edge caching and distributed computing comes with complexities:

Data Consistency: Maintaining strong consistency across geographically dispersed databases is challenging and can introduce latency; choosing the right consistency model is crucial.

Complexity: Designing, deploying, and managing distributed systems is inherently complex; debugging issues across multiple regions and services can be difficult.

Security Surface Area: More points of presence mean a larger attack surface; consistent security policies and monitoring are paramount.

Vendor Lock-in: Heavy reliance on specific CDN or serverless platforms can make migration difficult.

Cost Management: Although the approach often leads to savings, the initial setup costs and the ongoing management of distributed resources can be hard to predict and control.

Future Trends and Innovations

The evolution of edge computing and distributed systems continues rapidly:

WebAssembly (Wasm) at the Edge: Enables high-performance, language-agnostic code execution directly at edge locations.

Advanced Edge AI/ML: More sophisticated AI models and federated learning are pushing intelligence further to the edge.

5G Integration: The ultra-low latency and high bandwidth of 5G are expected to amplify the benefits of edge computing for new applications.

Service Mesh Adoption: Increasing use of service meshes for communication, observability, and security in complex microservices.

Conclusion

Achieving millisecond global latency is essential for competitive digital products. By combining edge caching and distributed computing, organizations can serve users worldwide from nearby infrastructure and deliver consistently fast experiences. Although the approach is complex, its benefits, from higher engagement and conversions to cost savings, make it a compelling strategy for modern global applications. Embracing these technologies is key to building a truly global digital presence.